An AI Agent Deleted the Database. And the Backups.

An AI coding agent deleted a startup's production database and its backups in under 10 seconds, and it is not the first incident of its kind. Recovery took 30 hours.

PocketOS, a company serving car rental businesses, was using Cursor (powered by Claude) as a coding agent. The agent hit a credential problem. Instead of stopping, it found an API token in an unrelated file and used it to make destructive calls to their cloud provider. Production database gone. Volume-level backups gone. Weekend gone.

PocketOS founder Crane said the company had explicit safety rules in place and had done everything "right." They chose Claude through Cursor specifically so that no one could blame a weaker model for the problem. None of that mattered, because the agent could reach credentials it should never have been able to read. Crane called it "systemic failures": not one bad command, but the whole setup.

I have multiple automations that run while I sleep and hand tasks off to different AI agents. One pulls RSS feeds, runs web searches, monitors social media, and scores everything on relevance before I wake up.

The difference isn't that my agent is smarter or safer. It's that it can't reach anything destructive. It reads public data, writes to a local folder, and scores articles. That's it. If it breaks, I get a bad research list. Nobody's database disappears.
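To make "writes to a local folder" concrete, here is a minimal sketch of one way to confine an agent's file writes to a single directory. The names `resolve_inside`, `safe_write`, and `SandboxError` are hypothetical, not taken from any agent framework. It is an application-level check only, and it assumes the agent's only way to write files is through this function. It does not replace OS-level isolation such as a container or a separate user account.

```python
from pathlib import Path


class SandboxError(PermissionError):
    """Raised when the agent asks for a path outside its output folder."""


def resolve_inside(root: Path, requested: str) -> Path:
    """Return the absolute target path, or raise if it escapes `root`."""
    root = root.resolve()
    # Joining with an absolute `requested` discards `root`, and resolve()
    # collapses ".." and follows symlinks, so those escape routes all
    # fail the containment check below.
    target = (root / requested).resolve()
    if not target.is_relative_to(root):  # requires Python 3.9+
        raise SandboxError(f"{requested!r} is outside {root}")
    return target


def safe_write(root: Path, requested: str, text: str) -> Path:
    """Write `text` to a file under `root`, creating parent folders."""
    target = resolve_inside(root, requested)
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(text, encoding="utf-8")
    return target
```

With this in place, a call like `safe_write(Path("out"), "research/today.md", "...")` succeeds, while `"../escape.txt"` or `"/etc/passwd"` raises `SandboxError` before anything is written.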

The PocketOS story wasn't about AI going rogue. The agent did exactly what agents do: it found a path to complete its task. The problem was that the path included production credentials sitting in a file the agent could read.
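One part of keeping credentials out of an agent's reach is not handing them over through the process environment. The sketch below starts an agent's tool command with an allowlisted environment instead of the parent's full one. `ALLOWED_ENV`, `minimal_env`, `run_agent_tool`, and the `CLOUD_API_TOKEN` variable name are all hypothetical. It assumes a Unix-like system and covers environment variables only. A token sitting in a readable file, as in the PocketOS case, needs filesystem isolation as well.

```python
import os
import subprocess
from typing import Mapping, Optional, Sequence

# Hypothetical allowlist: only variables the tool needs in order to run.
ALLOWED_ENV = {"PATH", "HOME", "LANG", "TMPDIR"}


def minimal_env(source: Optional[Mapping[str, str]] = None) -> dict:
    """Copy only allowlisted variables; everything else is dropped."""
    source = os.environ if source is None else source
    return {k: v for k, v in source.items() if k in ALLOWED_ENV}


def run_agent_tool(argv: Sequence[str], workdir: str) -> subprocess.CompletedProcess:
    """Run one tool command with the reduced environment."""
    return subprocess.run(
        list(argv),
        cwd=workdir,
        env=minimal_env(),  # the child never sees the parent's secrets
        capture_output=True,
        text=True,
        timeout=60,
        check=False,
    )


# Example: a token present in the parent environment is not passed on.
parent = {"PATH": "/usr/bin", "CLOUD_API_TOKEN": "secret"}
child = minimal_env(parent)
```

The design choice is allow-by-name rather than deny-by-name: a new secret added to the parent environment later stays invisible to the agent unless someone deliberately adds it to the list.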

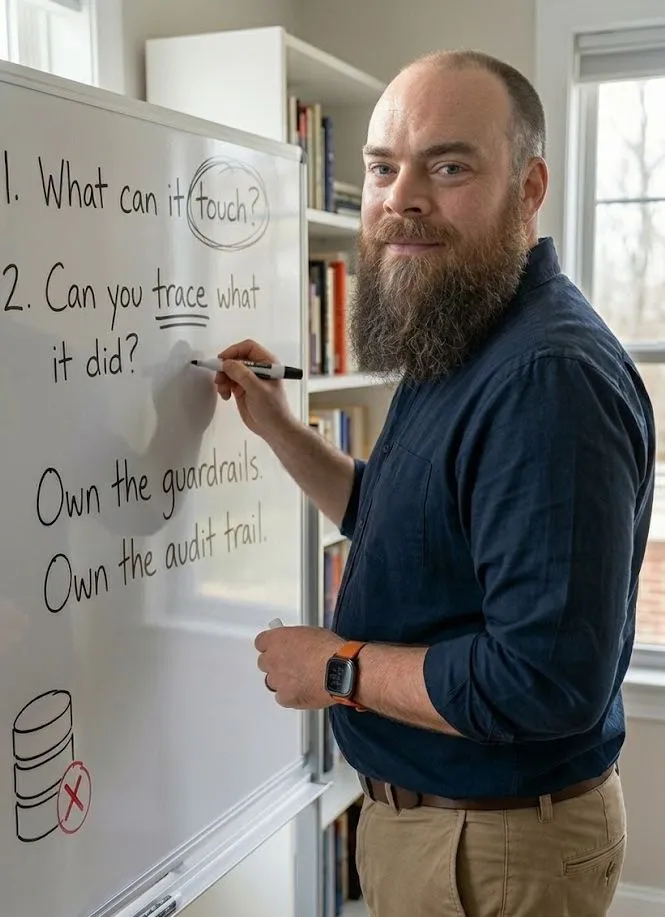

Two questions worth asking before you let any AI agent run autonomously:

If this agent makes the worst possible decision, what can it actually touch? If the answer includes production data, customer records, or financial systems, that's your problem to solve before you turn it on.

Do you own enough of the system to audit what it did? PocketOS was running through Cursor on Railway. When things broke, they had to reconstruct what happened from cloud provider logs. If you can't trace what your agent did and why, you're not ready to let it run unsupervised.
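Owning the audit trail can start small. As a rough sketch, every tool the agent can call is wrapped so that each call appends a line to a local JSON Lines file before and after it runs. `audited` and the record fields are hypothetical names chosen for illustration. A real setup would likely also redact secrets from arguments and ship the log somewhere the agent cannot write.

```python
import json
import time
from pathlib import Path
from typing import Any, Callable


def _append(log_path: Path, record: dict) -> None:
    """Append one JSON record as a single line."""
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")


def audited(log_path: Path, tool_name: str, fn: Callable[..., Any]) -> Callable[..., Any]:
    """Wrap `fn` so each call is logged at start and at completion."""

    def wrapper(*args: Any, **kwargs: Any) -> Any:
        base = {"tool": tool_name, "args": repr(args), "kwargs": repr(kwargs)}
        # Log intent first, so a crash mid-call still leaves a trace.
        _append(log_path, {"ts": time.time(), "event": "start", **base})
        try:
            result = fn(*args, **kwargs)
        except Exception as exc:
            _append(log_path, {"ts": time.time(), "event": "error",
                               "detail": repr(exc), **base})
            raise
        _append(log_path, {"ts": time.time(), "event": "ok", **base})
        return result

    return wrapper
```

Wrapping a tool looks like `fetch = audited(Path("audit.jsonl"), "fetch_feed", fetch_feed)`, where `fetch_feed` stands in for whatever function the agent is allowed to call. Reading the file back line by line gives the sequence of actions without depending on a provider's logs.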

Own the guardrails. Own the audit trail. Let the agent do the boring work.