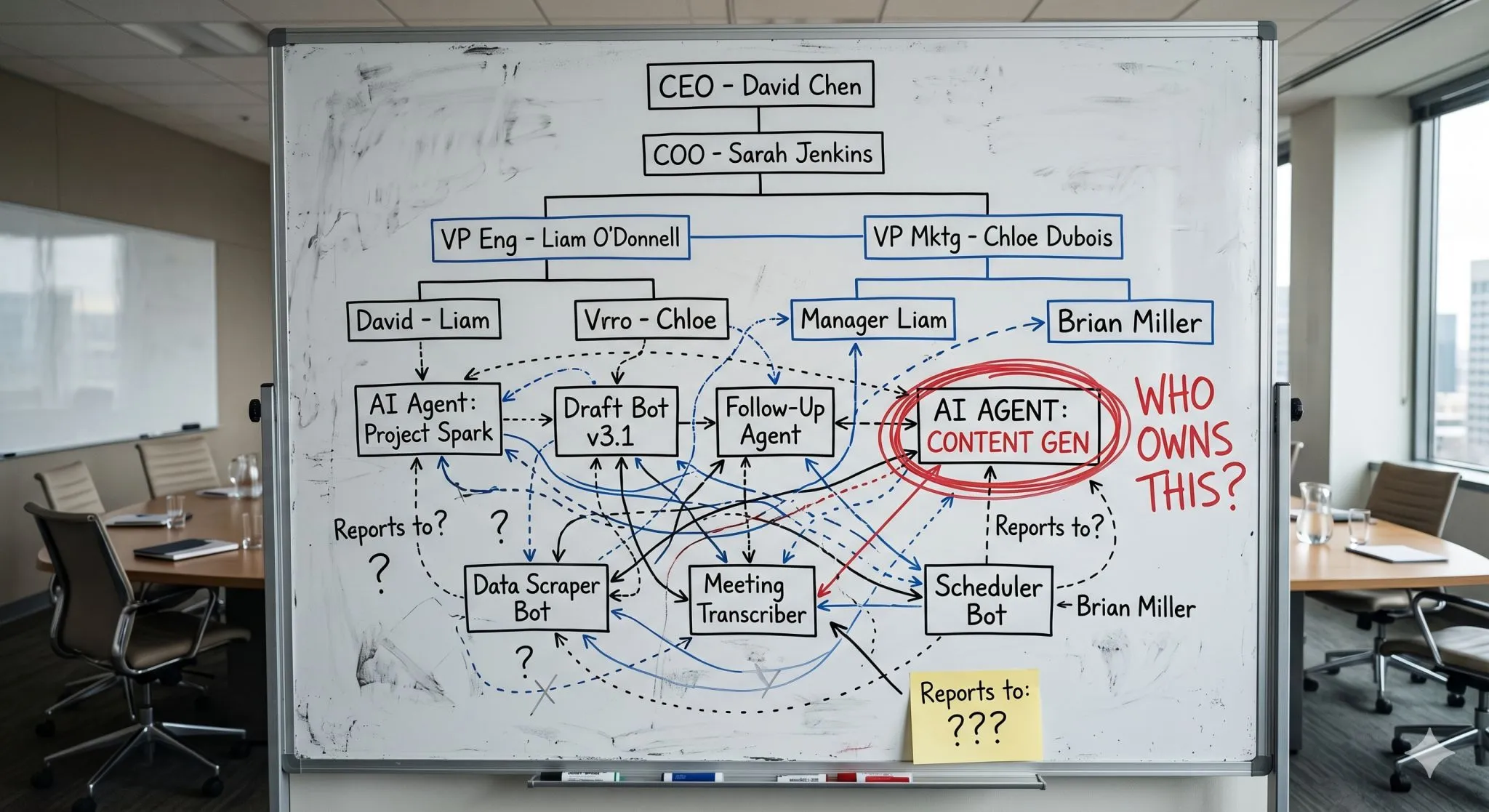

Somewhere a company is adding AI agents to their org chart right now. Phantom departments. Fake reporting lines. Dashboards tracking agent headcount like it means something.

This is how you create unmanaged work at machine speed.

Agents are better understood as an execution layer inside workflows you already own. They can research, draft, classify, and route. But without an owner, defined permissions, review standards, and failure handling, you've just built a faster way to create problems you don't know about yet.

Here's where it gets real: Who owns the agent drafting your customer follow-up emails? You? Your office manager? The person who asked for it? Who updates the instructions when your services change? Who checks accuracy? Who handles it when the agent sends a bad quote?

If nobody can answer those, the agent isn't a capability. It's a liability.

The best agent setups are nailed down. Clear inputs, clear outputs, known users, measurable quality, defined escalation paths. "Summarize this week's sales calls into three action items" is a workflow. "Help sales" is a demo.

Permissions should match risk. Can it only read? Can it draft but not send? Can it act, but only when the action is reversible? The right answer depends on what happens when the agent is wrong. (And it will be wrong.)
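If it helps to see those tiers written down, here is a rough Python sketch. Every name in it (`Permission`, `is_allowed`, the three action labels) is made up for illustration and not taken from any agent framework. The only assumption is that each tier includes everything below it, and that anything unrecognized gets refused.

```python
from enum import IntEnum


class Permission(IntEnum):
    """Hypothetical permission tiers, ordered from least to most risk."""
    READ_ONLY = 1       # can look things up, nothing else
    DRAFT = 2           # can prepare output; a person sends it
    ACT_REVERSIBLE = 3  # can act, but only when the action can be undone
    ACT_ANY = 4         # can act even when there is no undo


def is_allowed(granted: Permission, action: str, reversible: bool = True) -> bool:
    """Return True if an agent holding `granted` may perform `action`."""
    required = {
        "read": Permission.READ_ONLY,
        "draft": Permission.DRAFT,
        # Acting needs a higher tier when the action cannot be undone.
        "act": Permission.ACT_REVERSIBLE if reversible else Permission.ACT_ANY,
    }
    if action not in required:
        return False  # unknown actions are denied by default
    return granted >= required[action]


# An agent that may draft follow-up emails but not send them.
drafter = Permission.DRAFT
can_draft = is_allowed(drafter, "draft")
can_send = is_allowed(drafter, "act", reversible=False)
```

The deny-by-default branch is the part worth copying: an agent asked to do something nobody planned for should stop, not improvise.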

Before you scale any agent, write down on one page who owns it, what it's allowed to do, and what happens when it breaks. If that feels like overkill, the agent probably isn't important enough to scale. And if it IS important enough to touch customers or revenue, that one page isn't bureaucracy. It's basic management.
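That one page can be as simple as a record with three required fields. The sketch below is one possible shape, not a standard: `AgentOnePager`, its field names, and the `ready_to_scale` check are all hypothetical, and the example agent name is invented.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentOnePager:
    """Hypothetical one-page record: who owns it, what it may do, what happens when it breaks."""
    name: str
    owner: str = ""
    allowed_actions: List[str] = field(default_factory=list)
    failure_plan: str = ""

    def missing(self) -> List[str]:
        """List the sections of the page that are still blank."""
        gaps = []
        if not self.owner.strip():
            gaps.append("owner")
        if not self.allowed_actions:
            gaps.append("allowed_actions")
        if not self.failure_plan.strip():
            gaps.append("failure_plan")
        return gaps

    def ready_to_scale(self) -> bool:
        """An agent is ready to scale only when no section is blank."""
        return not self.missing()


# A page nobody has filled in yet.
page = AgentOnePager(name="customer-follow-up-drafter")
gaps_before = page.missing()

# The same page once someone takes ownership.
page.owner = "office manager"
page.allowed_actions = ["draft"]
page.failure_plan = "Owner reviews every draft; bad quotes are corrected by phone the same day."
ready = page.ready_to_scale()
```

A check like this does not make the answers good. It only makes it obvious when nobody has written them down.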