Stripe built 500 tools and 3 million automated tests before they let AI agents ship code. You downloaded a plugin and called it a strategy.

That's not a dig. It's the gap that kills 90% of AI agent projects before they ever get past the pilot phase.

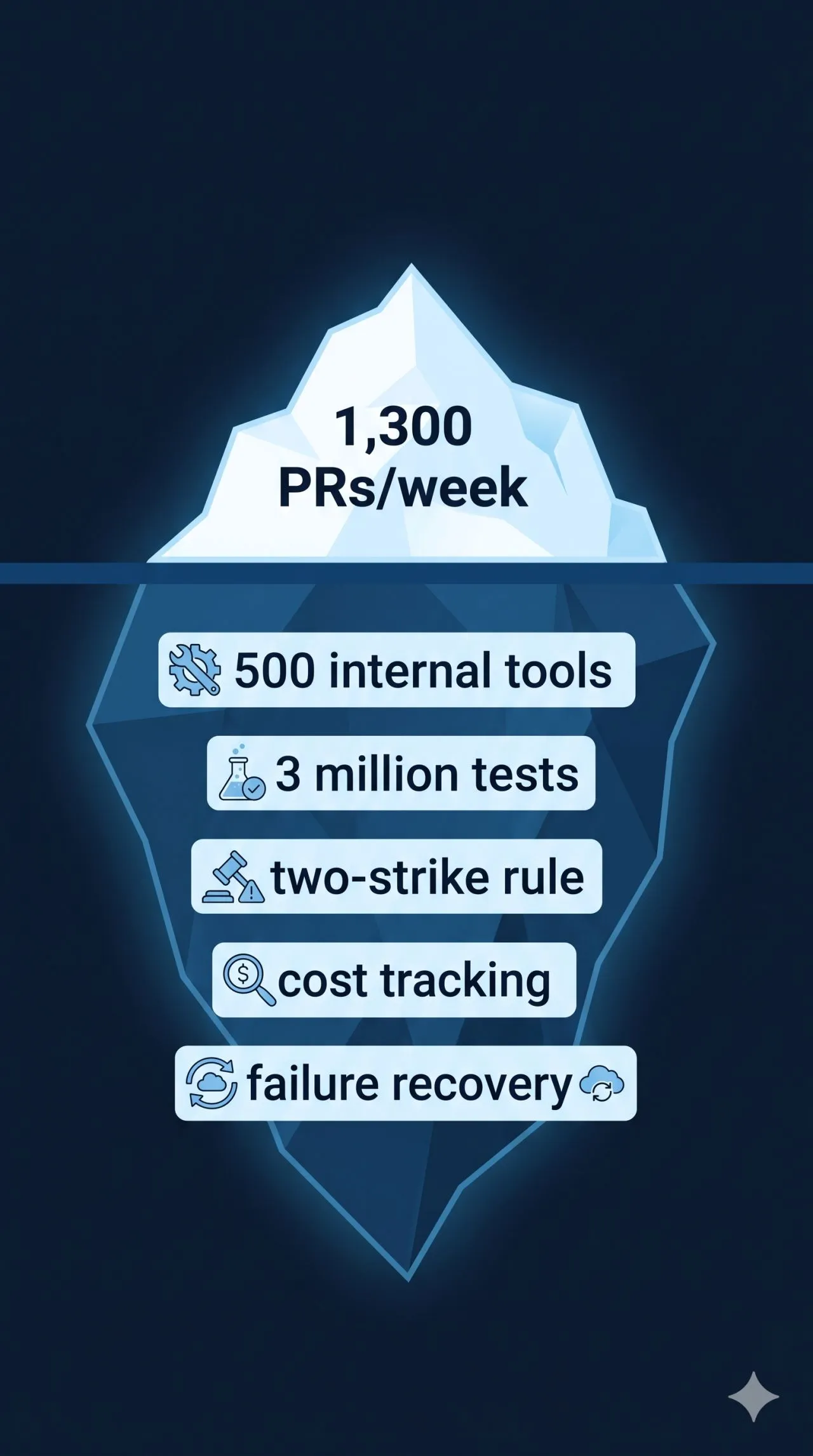

Stripe's agents (they call them Minions) are shipping 1,300 code changes a week now. No human writes a line. Ramp says 30% of their shipped changes come from agents. Spotify's running 650+ a month. This isn't experimental anymore.

But look at what Stripe actually built to get there. 500 internal tools. 3 million automated tests with selective execution per task. A two-strike rule (if the agent can't fix its own mistakes in two tries, it hands the job back to a person). Pre-warmed environments that spin up in 10 seconds. A hybrid system that mixes deterministic steps with AI decision-making so the critical parts never depend on the model getting creative.
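The two-strike rule is the easiest piece of that list to picture in code. Here's a minimal sketch of the idea, not Stripe's implementation: `attempt_fix` and `run_checks` are hypothetical callables standing in for the agent and the test suite, and counting a strike as any attempt whose checks fail is my assumption (the rule as described doesn't say whether the first attempt counts).

```python
from typing import Callable, Optional

MAX_STRIKES = 2  # assumption: a "strike" is any attempt whose checks fail


def run_with_two_strikes(
    attempt_fix: Callable[[Optional[str]], str],
    run_checks: Callable[[str], Optional[str]],
) -> dict:
    """Let the agent try, feed failures back to it, and escalate after two strikes.

    attempt_fix(feedback) returns a candidate patch (hypothetical agent call).
    run_checks(patch) returns None on success or an error message on failure.
    """
    feedback: Optional[str] = None
    for attempt in range(1, MAX_STRIKES + 1):
        patch = attempt_fix(feedback)
        feedback = run_checks(patch)  # deterministic step: tests, linters, etc.
        if feedback is None:
            return {"status": "passed", "attempts": attempt, "patch": patch}
    # Out of strikes: stop looping and hand the job back to a person.
    return {
        "status": "escalated_to_human",
        "attempts": MAX_STRIKES,
        "last_error": feedback,
    }
```

The point of the hard cap is that the loop ends deterministically. The model never gets to decide whether it deserves a third try.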

Most teams skip all of it. Costs spiral because nobody's tracking what the AI spends per task (one complex job can cost 10x a simple one). Things break when an agent gets interrupted mid-task and nobody planned for how it picks back up. And the AI will occasionally just make up a command that doesn't exist (ask me how I know).
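Two of those failure modes have cheap guardrails. The sketch below shows a per-task spending cap and an allowlist check for commands the agent proposes. Everything named here (`TaskBudget`, `validate_command`, the allowlist contents, the per-token prices you pass in) is hypothetical and only meant to illustrate the shape of the check.

```python
import shlex
from typing import List


class BudgetExceeded(Exception):
    """Raised when a task spends more than its cap."""


class TaskBudget:
    def __init__(self, task_id: str, limit_usd: float) -> None:
        self.task_id = task_id
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def charge(self, input_tokens: int, output_tokens: int,
               usd_per_1k_in: float, usd_per_1k_out: float) -> float:
        """Record the cost of one model call; stop the task if it goes over budget."""
        cost = (input_tokens / 1000) * usd_per_1k_in \
             + (output_tokens / 1000) * usd_per_1k_out
        self.spent_usd += cost
        if self.spent_usd > self.limit_usd:
            raise BudgetExceeded(
                f"{self.task_id}: spent ${self.spent_usd:.2f} "
                f"of ${self.limit_usd:.2f}"
            )
        return cost


# Assumption: an explicit allowlist of executables the agent may run.
ALLOWED_COMMANDS = {"git", "pytest", "ls"}


def validate_command(command_line: str) -> List[str]:
    """Reject commands the agent made up before anything reaches a shell."""
    argv = shlex.split(command_line)
    if not argv:
        raise ValueError("empty command")
    if argv[0] not in ALLOWED_COMMANDS:
        raise ValueError(f"command not allowed: {argv[0]!r}")
    return argv
```

Neither check is smart. They're deterministic gates around the model, so a runaway task or an invented command fails loudly and early.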

The lesson is annoyingly simple: start with one thing. Make sure it works 95% of the time. Then add the next thing. Each layer doubles the complexity (and the layers compound fast).
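The arithmetic behind "compound fast" is worth seeing once. This is a rough illustration under one big assumption: every layer succeeds independently at the same rate, which real systems rarely do.

```python
def chain_success_rate(per_step_rate: float, steps: int) -> float:
    """Chance that every step in a chain succeeds, assuming independent steps."""
    return per_step_rate ** steps


# One layer at 95% is fine. Five layers stacked: about 77%. Ten: about 60%.
for n in (1, 5, 10):
    print(n, round(chain_success_rate(0.95, n), 4))
```

That's why each layer has to be solid before the next one goes on top of it.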

If you're evaluating AI agents for your business, ask one question: what happens when it fails halfway through a task? If nobody has an answer, you're not ready to go live. You're ready to test.
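For what an answer can look like, here's a minimal checkpointing sketch. It assumes each step is safe to re-run (idempotent), and the names (`run_resumable`, the JSON state file) are hypothetical, not any particular framework's API.

```python
import json
import os
from typing import Callable, List, Tuple


def run_resumable(steps: List[Tuple[str, Callable[[], None]]],
                  state_path: str) -> List[str]:
    """Run named steps in order, skipping any already recorded as done."""
    done: List[str] = []
    if os.path.exists(state_path):
        with open(state_path) as f:
            done = json.load(f)["done"]

    for name, step in steps:
        if name in done:
            continue  # finished before the interruption
        step()
        done.append(name)
        # Write to a temp file, then swap it in, so a crash mid-write
        # never leaves a half-written checkpoint behind.
        tmp_path = state_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump({"done": done}, f)
        os.replace(tmp_path, state_path)
    return done
```

If the process dies halfway, calling `run_resumable` again with the same `state_path` picks up at the first unfinished step. A step that crashed before its checkpoint was written runs again, which is why the idempotency assumption matters.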